Recently, Rendered.ai and the Rochester Institute of Technology’s Digital Imaging and Remote Sensing (DIRS) Laboratory announced a collaboration to combine the physics-driven accuracy of the DIRSIG synthetic imagery model with Rendered.ai’s cloud-based platform for high-volume synthetic data generation.

Machine learning (ML) algorithms that use computer vision (CV) data are a key tool for exploiting the rapidly expanding capabilities and content offered by Earth observation (EO) collection and analytics companies around the world. Rendered.ai provides a platform as a service (PaaS) that lets data scientists and CV engineers produce large, configurable synthetic CV datasets at scale in the cloud for training artificial intelligence (AI) and ML systems.

Generating data for sensors that don’t exist yet

Rendered.ai focuses on synthetic data generation for computer vision use cases in which a scenario consisting of sensors, sensor platforms, scene content, and environmental effects is modeled with random variation. This is done so that a variety of CV content, including video, imagery, and 3D models, can be generated for use in AI training.

Data scientists and innovators can control the distribution and characteristics of the scene and scene content to generate datasets for rare scenarios or objects, reference objects, or even sensors that don’t exist yet. For example, synthetic data could be created for a future Earth observation satellite system to test the system’s data processing before the satellite is in orbit.
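The idea of modeling a scenario with random variation, while controlling how often rare objects appear, can be illustrated with a minimal Python sketch. This is not Rendered.ai’s or DIRSIG’s API; every name, parameter, and value range below (`Scenario`, `sample_scenario`, `OBJECT_WEIGHTS`, the altitude and sun-angle ranges) is a hypothetical chosen only for illustration.

```python
import random
from dataclasses import dataclass

# Hypothetical parameter space; none of these names or ranges come from
# Rendered.ai or DIRSIG.
SENSORS = ["rgb", "panchromatic", "ir", "sar"]
OBJECT_WEIGHTS = {"car": 0.70, "truck": 0.25, "rare_vehicle": 0.05}


@dataclass
class Scenario:
    sensor: str               # sensor type to simulate
    altitude_km: float        # sensor platform altitude
    sun_elevation_deg: float  # environmental effect: illumination angle
    cloud_cover: float        # environmental effect: fraction of sky covered
    objects: list             # scene content: object classes to place


def sample_scenario(rng, object_weights=OBJECT_WEIGHTS, n_objects=10):
    """Draw one scenario, varying sensor, platform, environment, and content."""
    names = list(object_weights)
    weights = [object_weights[name] for name in names]
    return Scenario(
        sensor=rng.choice(SENSORS),
        altitude_km=rng.uniform(400.0, 800.0),
        sun_elevation_deg=rng.uniform(10.0, 90.0),
        cloud_cover=rng.uniform(0.0, 0.5),
        # Weighted sampling controls how often each object class appears.
        objects=rng.choices(names, weights=weights, k=n_objects),
    )


def sample_dataset(n, seed=0, **kwargs):
    """Draw n scenarios from a seeded generator so a run can be reproduced."""
    rng = random.Random(seed)
    return [sample_scenario(rng, **kwargs) for _ in range(n)]
```

In this sketch, raising the weight of `rare_vehicle` (for example, passing `object_weights={"car": 0.4, "truck": 0.2, "rare_vehicle": 0.4}` to `sample_dataset`) shifts the distribution toward the rare class. Each sampled scenario would then be handed to a simulator to render the actual imagery, a step that is outside the scope of this example.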

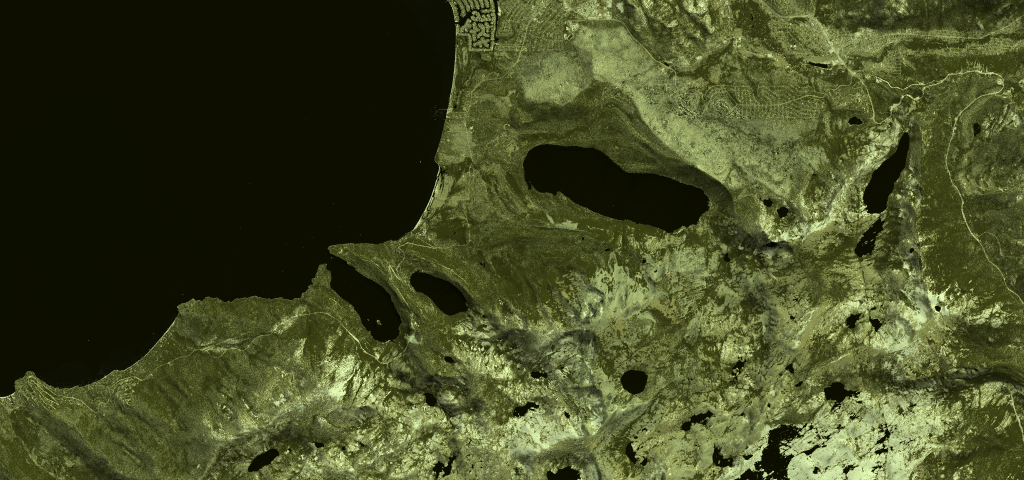

Rendered.ai has built synthetic data applications on its platform for both active and passive sensors, such as RGB, panchromatic, IR, and synthetic aperture radar (SAR), and it provides subscribers with access to these applications. With the DIRS Laboratory collaboration, the company will offer hyperspectral simulation and a variety of other capabilities. It is also regularly engaging with more companies that are interested in porting their simulation capabilities to the platform.

Combining DIRSIG’s synthetic imagery model with Rendered.ai’s platform

The DIRSIG model produces a range of simulated output representing passive single-band, multi-spectral, or hyperspectral imagery from the visible through the thermal infrared region of the electromagnetic spectrum. DIRSIG is widely used to test algorithms and to train analysts on simulated standard imagery products. The Rendered.ai team has built simulators for visible light and SAR; however, DIRSIG’s breadth of capability and ongoing investment by granting agencies will give qualified Rendered.ai customers a much wider range of field-tested, production-quality sensor modeling technology.

DIRSIG is a mature, government-funded toolkit and application for generating a variety of synthetic sensor content across the electromagnetic spectrum, typically for remote sensing use cases. To date, its primary users have been government users and Earth observation researchers. With so many EO satellites expected to launch in the next decade (estimates are in the thousands), there is an increasing opportunity to combine DIRSIG with a platform like Rendered.ai, allowing more users to generate data and existing users to generate larger datasets in the cloud.

The initial project with DIRSIG and Rendered.ai is being developed for a confidential lighthouse customer, with the goal of making the basic integration available to organizations through a synthetic data channel (a purpose-built synthetic data application) within the Rendered.ai framework. Rendered.ai and RIT will announce details and requirements for accessing DIRSIG on the platform at a future date.

Additional use cases for synthetic data

Synthetic data can be used to improve AI training for image analysis and object recognition. The NGA SBIR project that Rendered.ai is engaged in with Orbital Insight has demonstrated that synthetic data can be effective for these uses. It’s also possible to generate synthetic data that can test AI for false positives, bias, and other common failures, so synthetic data is useful for more than just training.

While improving object detection is important, one of the bigger long-term questions for the industry is “How will we build AI tools for next-generation sensors?” or, for that matter, “What do we need from next-generation sensors in the first place?” Synthetic data is the only way to address these important issues.

Synthetic data can certainly represent existing geographical places on the planet as well. Recently, Rendered.ai joined the Esri Partner Program as a Startup Partner to take advantage of GIS data and imagery as backgrounds and context for synthetic environments.