Xaxxon’s OpenLIDAR Sensor is a rotational laser scanner with open source software and hardware, intended for use with autonomous mobile robots and simultaneous localization and mapping (SLAM) applications.

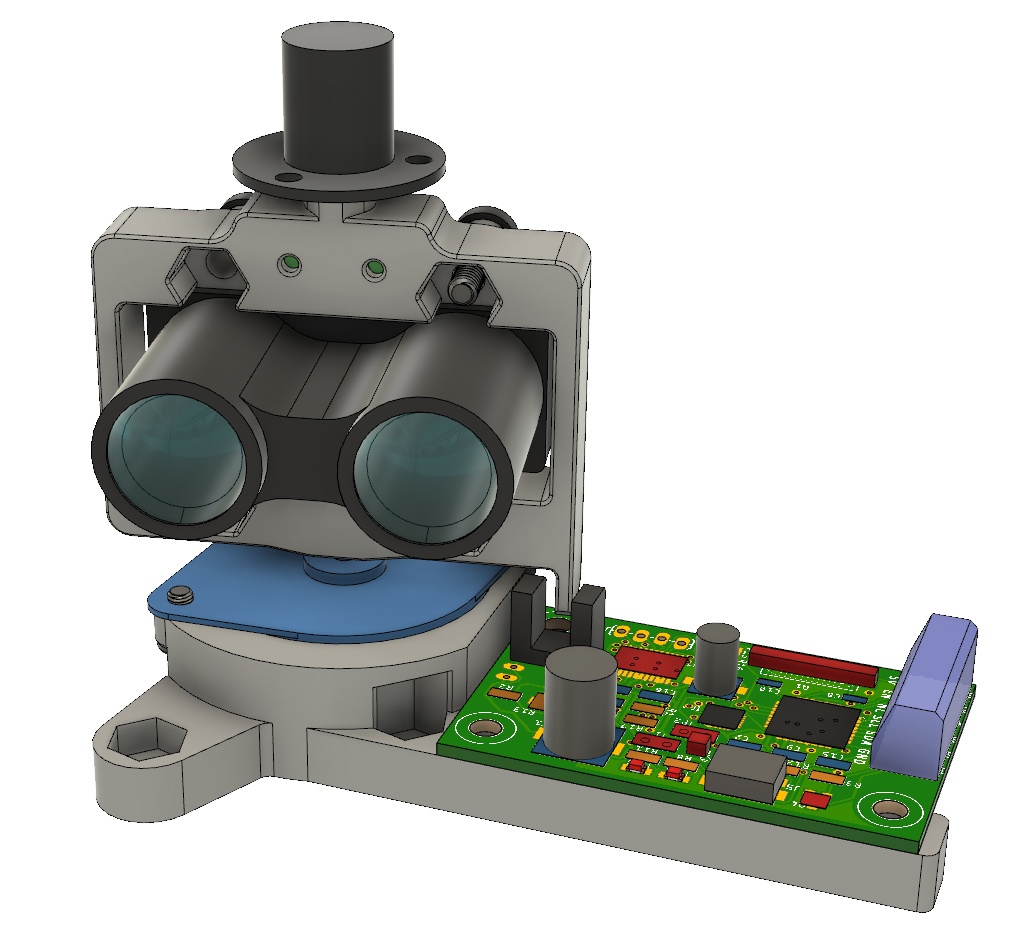

Xaxxon Technologies is a Vancouver-based developer and manufacturer of open source robotic devices. Its most recent offering is a standalone OpenLIDAR sensor for robotics developers, educators and hobbyists. It consists of a Garmin LIDAR-Lite v3 sensor wired through a rotational slip ring, a stepper motor drive, two 3D-printed frame parts, and an Arduino-compatible printed circuit board (PCB). The assembled unit weighs 180 grams and has a maximum range of 40 m, a sample rate of up to 750 Hz, a resolution of 1 to approximately 2.5 cm and a scanning speed of up to 250 RPM.
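The sample rate and scanning speed together determine how finely each revolution is sampled. As a rough sketch, assuming the sensor can run at its maximum sample rate and maximum rotation speed at the same time (the article does not say whether it can), the arithmetic looks like this; the function name is made up for illustration:

```python
def angular_resolution_deg(sample_rate_hz, rpm):
    """Return (samples per revolution, degrees between samples).

    Assumes samples are spread evenly over a full 360-degree turn.
    """
    # Revolutions per second = rpm / 60, so samples per revolution is
    # the sample rate divided by that figure.
    samples_per_rev = sample_rate_hz * 60.0 / rpm
    return samples_per_rev, 360.0 / samples_per_rev


samples, step_deg = angular_resolution_deg(750, 250)
print(samples, step_deg)
```

With the quoted maximums this works out to 180 samples per revolution, or one reading every 2 degrees. Spinning more slowly at the same sample rate would give a finer angular step.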

Xaxxon Oculus Prime robot.

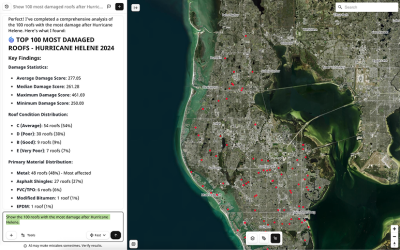

Colin Adamson, owner of Xaxxon, explains that the sensor was originally designed for use with Xaxxon’s Oculus Prime mobile robot, a telerobotic, internet-operated vehicle capable of autonomous navigation, automated patrols, and security sensing functions. Looking ahead, it is also intended for use with robots that Xaxxon is currently developing, for mapping and localization, path planning and obstacle avoidance.

“We designed our own sensor because nothing available on the market quite fit our needs of low cost, open source, and the ability to adapt the mechanical design to work with the robot chassis design. The stand-alone sensor is targeted at hobbyists, education/universities or anyone needing to add mapping and auto-navigation to an unmanned vehicle or multirotor aircraft.”

Open source and open hardware

Adamson explains that Xaxxon’s products have typically been aimed at the low-cost hobbyist market, which is why the company tries to keep costs as low as possible. As a hardware integrator, it is particularly excited by the availability of lower-cost sensors, because they make it possible to offer more capable robots.

“I think the characteristic of open source/open hardware is just as important as lower cost, as it gives robot designers the ability to precisely adapt a sensor to their often-very-specific application, and even enhance the software and hardware where possible”.

Until now, Xaxxon has been using low-cost depth cameras as Oculus Prime’s main SLAM sensor, because the lidars available on the market were too expensive, bulky or power-hungry, or were in unstable supply.

Xaxxon’s OpenLIDAR Sensor is a rotational laser scanner with open software and hardware.

“Low cost depth cameras such as Orbbec and Realsense do the job, but have limited range and field of view. Auto navigation with depth cameras alone works well enough, but making accurate, large maps by manually driving the robot around definitely takes some practice, and a lot of patience. The new sensor makes mapping faster, easier, and more accurate. Localization while auto-navigating is also more robust and accurate, thanks to the 360 degree FOV compared to the 60-90 degrees of a depth camera”.

The sensor is intended for autonomous navigation of relatively low-speed, small mobile robots, and it can only capture data in a 2D horizontal plane. However, the ROS LaserScan message can be converted into a point cloud message within 3D space using other ROS packages. If mounted on a motorized tilting platform, the sensor could perform full 3D scanning. Xaxxon doesn’t currently offer that setup, so it would be a DIY project.
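The geometry behind such a conversion is simple. The sketch below is not Xaxxon’s code or any ROS package’s implementation; it is a plain-Python illustration that assumes evenly spaced beams and a hypothetical tilting platform that pitches the scan plane about the sensor’s y axis:

```python
import math


def scan_to_points(ranges, angle_min, angle_increment, tilt=0.0,
                   range_min=0.0, range_max=40.0):
    """Project 2D polar range readings into (x, y, z) points in metres.

    tilt is the assumed pitch of the scan plane in radians; 0.0 keeps
    every point in the horizontal plane (z == 0).
    """
    points = []
    for i, r in enumerate(ranges):
        # Skip missing or out-of-range readings (inf, nan, too near/far).
        if not math.isfinite(r) or not (range_min < r <= range_max):
            continue
        theta = angle_min + i * angle_increment
        x = r * math.cos(theta)
        y = r * math.sin(theta)
        # Rotate the in-plane point about the y axis by the tilt angle.
        points.append((x * math.cos(tilt), y, -x * math.sin(tilt)))
    return points


# Three beams a quarter turn apart; the middle reading is missing.
print(scan_to_points([1.0, float("inf"), 2.0], 0.0, math.pi / 2))
```

Sweeping the tilt angle between revolutions and collecting the points from each pass is, in principle, how a tilting mount would build up a 3D cloud.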

Robotic developer support

The Xaxxon sensor is fully compatible with all versions of ROS, an open source meta-operating system for robots. “Currently, the sensor is only used in conjunction with the ROS framework, because ROS is mature software with most of the functionality needed already built in, including high performance auto-navigation packages, mapping algorithms, graphical visualization tools, and the ability to easily incorporate data from multiple sensors of various types simultaneously”.

The sensor connects via USB to a host PC running the ROS driver, which continuously broadcasts a 360-degree ROS LaserScan message. This message is one of the inputs the ROS navigation system uses to localize a mobile robot and plan a driving path through a known map. It is also used by mapping algorithms such as Gmapping, Hector SLAM and Cartographer to create a map of an area while the robot is driven around manually.
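To give a sense of what a consumer of that message works with, here is a minimal sketch that does not depend on ROS. The `Scan` class is a stand-in that copies a few field names from the `sensor_msgs/LaserScan` message, and `nearest_obstacle` is a hypothetical helper, not part of any ROS package:

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Scan:
    """Stand-in holding a subset of the LaserScan fields."""
    angle_min: float        # bearing of the first reading, radians
    angle_increment: float  # angle between consecutive readings, radians
    range_min: float        # readings below this are discarded, metres
    range_max: float        # readings above this are discarded, metres
    ranges: List[float]     # one distance per beam, metres


def nearest_obstacle(scan: Scan) -> Optional[Tuple[float, float]]:
    """Return (distance, bearing) of the closest valid reading, or None."""
    best = None
    for i, r in enumerate(scan.ranges):
        if not math.isfinite(r) or r < scan.range_min or r > scan.range_max:
            continue
        if best is None or r < best[0]:
            best = (r, scan.angle_min + i * scan.angle_increment)
    return best


# A made-up revolution of 180 beams, 2 degrees apart, with one close object.
scan = Scan(-math.pi, math.radians(2.0), 0.05, 40.0, [5.0] * 180)
scan.ranges[90] = 1.2
print(nearest_obstacle(scan))
```

In a real ROS node the same fields would arrive in a subscriber callback rather than being built by hand.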

Typically, accurate ROS SLAM and navigation requires distance sensor input (lidar or a depth camera) as well as odometry sensors (IMU, gyro or wheel encoders). “Aside from use with ROS, the sensor’s command-set and data output format is quite simple, so it would be fairly easy for someone proficient in writing Python scripts, or any language with serial-over-USB communication hooks, to get the sensor to do what’s needed for any application. The sensor’s PCB/microcontroller is Arduino-compatible, making modifications to the firmware straightforward”.
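The article does not describe the sensor’s actual command set or output format, so the framing below is invented purely for illustration: a two-byte header, a 16-bit sample count, then one 16-bit little-endian distance in centimetres per sample. It is a sketch of what a small Python reader might look like under that assumption, not the real Xaxxon protocol:

```python
import io
import struct

HEADER = b"\xff\xff"  # hypothetical start-of-revolution marker


def read_revolution(stream):
    """Read one hypothetical frame from a binary stream.

    Returns a list of distances in metres, or None if the stream ends
    before a complete frame has arrived.
    """
    # Slide a two-byte window along the stream until the header appears.
    window = stream.read(2)
    while window != HEADER:
        if len(window) < 2:
            return None
        nxt = stream.read(1)
        if not nxt:
            return None
        window = window[1:] + nxt
    raw = stream.read(2)
    if len(raw) < 2:
        return None
    (count,) = struct.unpack("<H", raw)
    payload = stream.read(2 * count)
    if len(payload) < 2 * count:
        return None
    centimetres = struct.unpack("<%dH" % count, payload)
    return [cm / 100.0 for cm in centimetres]


# Simulated bytes: one stray byte, then a three-sample frame.
frame = b"\x00" + HEADER + struct.pack("<H", 3) + struct.pack("<3H", 100, 250, 4000)
print(read_revolution(io.BytesIO(frame)))
```

The function only needs an object with a `read(n)` method, so with the pyserial package installed a `serial.Serial` port opened on the sensor’s USB device (port name and baud rate would depend on the setup) could be passed in place of the `io.BytesIO` test stream.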

Oculus Prime accessory version

Xaxxon has already announced an upcoming accessory version of the sensor, which allows a customer with a stock Oculus Prime robot to remove the Orbbec depth camera, snap the lidar in place, then re-attach the depth camera. “The lidar has the exact same hardware and firmware as the stand-alone version, with a different outer frame. We’re finishing an Oculus Prime software update that allows the lidar to be used for mapping and localization, and the depth camera to be used for detecting and avoiding obstacles that are closer to ground level, or anything below the horizontal plane of the lidar, that would be otherwise invisible”.

The version shown above takes advantage of the sensor’s simple mechanical design and ‘open hardware’ nature. It is intended to be mounted entirely within the chassis of a new robot under development, where the robot’s upper frame serves as the upper pivot, supports the slip ring and routes the range-sensor wiring. The firmware and ROS driver software are identical to the stand-alone version, but the rotation photo-sensor is mounted on top of the PCB in this case, rather than on the bottom as in the stand-alone sensor.