How do you digitize 137 million pieces of a collection? Two guys are working on that.

WASHINGTON – Most people know the Smithsonian for the National Museum of Natural History here. And that is, indeed, an enormous part of the Smithsonian’s work. But the organization maintains a total of 19 museums and galleries, plus the National Zoological Park, with a total of some 137 million specimens and objects (not to mention 1.5 million books).

Because the collection is so vast, only about two percent of it is on display at any one time. Wouldn’t it be great, though, if 3D data capture could bring a much greater portion of that collection to the public through the web?

Well, that’s what Vincent Rossi and Adam Metallo are working on, right now. Both came to the Smithsonian with backgrounds in fine art as model makers, charged with putting together the fairly traditional exhibits that bring history to life for so many visitors. Then they got a grant for a 3D scanner, with the purpose of pairing it with a 3D printer and making better models.

“Then we quickly realized there are many needs around the institution,” said Rossi in an interview with SPAR, “and not just the exhibits. That’s where we started connecting with researchers and it just grew as a grassroots effort.”

Hence the new titles they took on a little more than a year ago: 3D digitization coordinators in the Digitization Program Office. “Our mission,” Rossi said with something of a grim determination, “is to digitize these huge collections in 3D – everything from insects to aircraft. Our day-to-day job is essentially trying to figure out how to actually accomplish that. How do we take 3D digitization and take it to the Smithsonian scale? We’re at the ground floor of trying to understand that.”

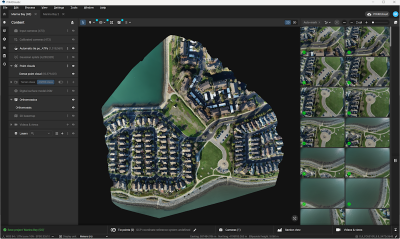

So, while the pair do spend about half their time carefully using laser scanning, photogrammetry, CT scanning and any other technology that might cross their path to digitize any number of objects in a given week, they spend the other half of the time as evangelists for both the technology and their work at the Smithsonian, looking for buy-in both within the institution’s 4,000 employees and from any corporate partners who might want to lend a hand.

In the short term, that means they’re trying to put together point clouds and models of a diverse set of objects from within the collection, showing off what 3D data capture can do for the Smithsonian’s wide-ranging constituency: researchers, curators, conservators, the public, educators, all the folks who want increased access to what the Smithsonian has.

Some of the potential benefits are pretty obvious. Rossi noted, “the primate bone collection is basically being locked down” until the Smithsonian can get a handle on how to divvy up access. “You’d have researchers taking caliper measurements over and over again at the same points,” he explained, “and they’d actually wear away the bones.” If those measurements could be taken by anyone in the world via the internet using exact 3D replicas of those bones, that would clearly offer a tangible benefit.

3D data capture’s benefits were driven home late last year, in fact, when Rossi and Metallo took a quick trip to Chile after workers widening the Pan-American Highway came across an unexpected “whale graveyard” for which there was no hope of preservation. They captured five whales in 3D, earning them write-ups in Nature and National Geographic, and preserving for posterity information that may prove useful to scientists in ways still unknown. They even used 123D Catch and a Z Corp printer to make 1:24 scale models of some of the whale fossils.


There are plenty of barriers in their way, of course. They still haven’t found a great way to display objects in 3D on the web, though they’re excited about the prospects of HTML5 and its native 3D support. They’re happy with ASCII text files as a simple way to collect a point cloud, but once they start getting into polygons and models they’re concerned about proprietary file formats and how those will age. There is also the issue of how to couple information about color accuracy and geometric accuracy for transmission to future users of the file.

“We can attach a known accuracy for geometry,” said Metallo, “and a known accuracy for color. When someone works with that object and wants to know what the color correction process is, we have to supply that information. But that’s typically in a more proprietary file format.”
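To make the file-format worry concrete, here is a minimal Python sketch of what a plain ASCII point cloud with its accuracy information attached might look like. The layout is an assumption made for illustration, not the Smithsonian's actual format: one `x y z r g b` record per line, with hypothetical `#` header lines carrying the geometric accuracy and a note on color correction.

```python
import io


def write_xyzrgb(stream, points, geometry_accuracy_mm, color_note):
    """Write points as plain ASCII, one 'x y z r g b' record per line."""
    # Hypothetical convention: '#' header lines carry the accuracy metadata.
    stream.write(f"# geometry_accuracy_mm: {geometry_accuracy_mm}\n")
    stream.write(f"# color_correction: {color_note}\n")
    for x, y, z, r, g, b in points:
        stream.write(f"{x:.4f} {y:.4f} {z:.4f} {r} {g} {b}\n")


def read_xyzrgb(stream):
    """Return (metadata dict, list of point tuples) from the layout above."""
    metadata, points = {}, []
    for line in stream:
        line = line.strip()
        if not line:
            continue
        if line.startswith("#"):
            # Split 'key: value' header lines into the metadata dict.
            key, _, value = line[1:].partition(":")
            metadata[key.strip()] = value.strip()
            continue
        x, y, z, r, g, b = line.split()
        points.append((float(x), float(y), float(z), int(r), int(g), int(b)))
    return metadata, points


# Round-trip one made-up point through an in-memory text buffer.
buffer = io.StringIO()
write_xyzrgb(buffer, [(0.0, 1.5, 2.25, 200, 180, 160)], 0.1, "hypothetical profile v1")
buffer.seek(0)
meta, pts = read_xyzrgb(buffer)
```

Because everything is human-readable text, a file like this can still be parsed decades from now with a few lines of code, which is the appeal Rossi and Metallo describe.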

And then there is the problem of the holes. “You’re never going to get 100 percent 3D capture,” Rossi said, “but if you need a 3D print, you need a water-tight solid, and many softwares use algorithms to complete the missing geography. If that’s your archivable data, someone could be investigating an object 30 years from now, and if they’re making decisions on geometry that may or may not have been there, that could be a problem.”
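One common way to see where a scan has holes, sketched here in Python as an illustration rather than as the team's own workflow, is to count how many triangles share each edge of the mesh. In a closed surface every edge belongs to exactly two triangles; an edge used only once sits on the rim of a hole. The function names and the tetrahedron test data are made up for the example, and the check covers only this edge-sharing condition, not problems such as self-intersections.

```python
from collections import Counter


def edge_counts(triangles):
    """Count how many triangles use each undirected edge."""
    counts = Counter()
    for a, b, c in triangles:
        for u, v in ((a, b), (b, c), (c, a)):
            counts[(min(u, v), max(u, v))] += 1
    return counts


def boundary_edges(triangles):
    """Edges used by exactly one triangle; these outline the holes."""
    return sorted(edge for edge, n in edge_counts(triangles).items() if n == 1)


def is_watertight(triangles):
    """True when every edge is shared by exactly two triangles."""
    return all(n == 2 for n in edge_counts(triangles).values())


# A closed tetrahedron, given as triangles over vertex indices 0..3.
closed = [(0, 1, 2), (0, 3, 1), (1, 3, 2), (2, 3, 0)]
# The same shape with one face missing, as if the scanner never saw it.
open_mesh = closed[:3]

closed_ok = is_watertight(closed)
open_ok = is_watertight(open_mesh)
hole_rim = boundary_edges(open_mesh)
```

Recording the boundary edges before any hole-filling algorithm runs would give a future researcher a way to tell captured geometry from geometry the software filled in.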

In the face of 137 million things to scan, though, finding problems isn’t all that difficult. “The one resource we have plenty of is amazing content,” Rossi said, “and along with that comes frustrating problems for us, but they’re potentially interesting problems for the industry. Those are the connections we’re hoping to make at a conference like SPAR.”

You can see Rossi and Metallo present on their work at the Smithsonian at SPAR International 2012, April 15-18, in Houston. Learn more at their YouTube Channel and on their Facebook page.