It has been a dream of Planet CEO Will Marshall to make the earth searchable as if it were the internet. To do this, objects visible on satellite imagery need to be classified and indexed into database records that can be accessed and manipulated. While satellite imagery providers use AI to help with object detection, turning such big datasets into insights in a user-friendly way is still a challenge. Satellite imagery provider Planet presented a major step forward at its user conference in April 2023, where the company showed a browser-based user interface that lets users query Planet’s earth datasets much as they would use a search engine.

ChatGPT integration

The following tweet from Planet employee Andrew Zolli, present at the Planet User Conference, gives some more context about the technology behind the new user interface:

My friend @kw shows off “queryable California”, a demo combining @planet planetary variables data and #chatgpt / LLM #ai, developed with our AI for Good colleagues at @Microsoft. A vision of what may come! :-) #planetexplore2023 pic.twitter.com/2yYZSoM0ie

— Andrew Zolli (@andrew_zolli) April 12, 2023

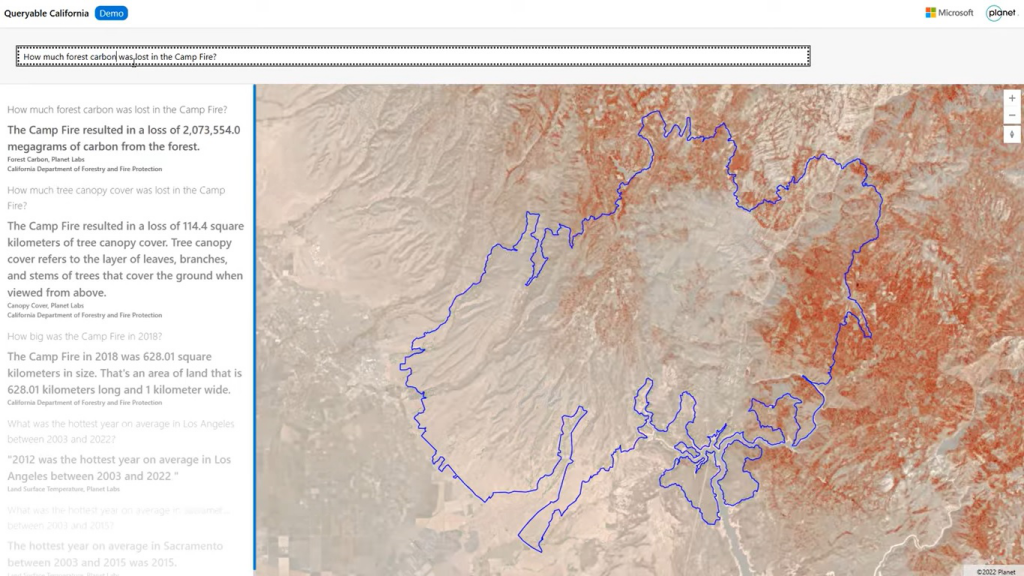

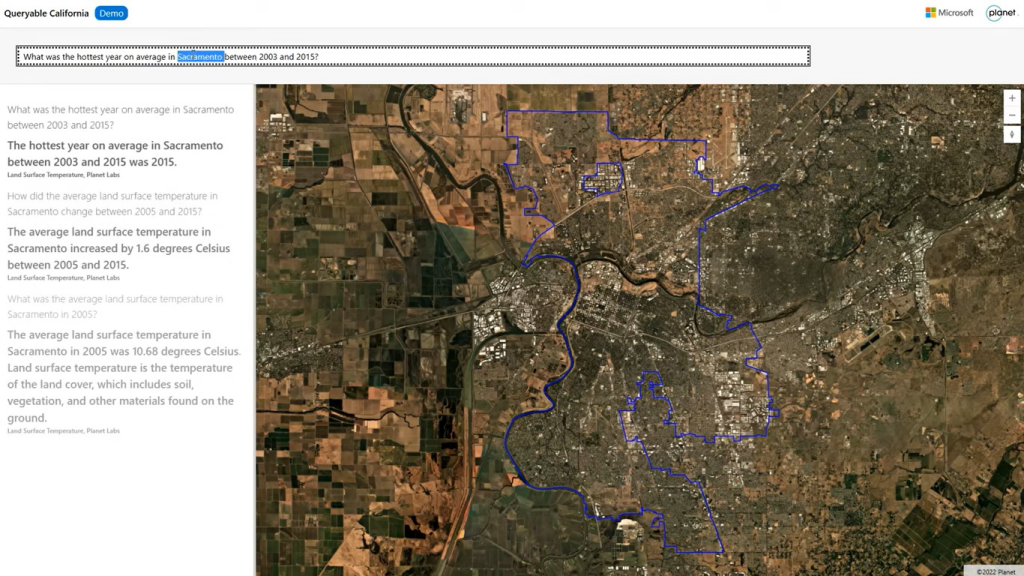

To see the interface in action, have a look at the video below. It shows a user entering questions such as “How did the average land surface temperature in Sacramento change between 2005 and 2015?”, after which the application returns a text-based answer accompanied by a satellite image of the area of interest and a related data layer. Other search queries used are “What was the hottest year on average in Sacramento on average between 2003 and 2025” and “How much tree canopy cover was lost in the Camp Fire?”

While the video title reads “Queryable Earth: combining satellite imagery and next-generation AI”, the satellite imagery showing the area of interest is not the most interesting feature here. The real innovation is the integration of Planet’s data and ChatGPT, an artificial intelligence chatbot built on OpenAI’s GPT family of large language models, which use deep learning to produce human-like text. Planet’s user interface can interpret the search query entered by the user, process data, compute and formulate an answer using Planet’s satellite imagery and Planetary Variables data products, and present that answer to the user in plain text. The search query is called a “prompt”, and it serves as the starting point for ChatGPT's response.
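To make the flow described above more concrete, here is a minimal Python sketch of the three steps: interpret the prompt, compute on a data layer, and phrase the result in plain text. It is not Planet's or Microsoft's implementation, which has not been published. The regular expression merely stands in for the language model, and every name in it (`TOY_LST`, `ParsedQuery`, `parse_prompt`, `compute_change`, `answer`) as well as the temperature values are hypothetical placeholders, not real measurements.

```python
import re
from dataclasses import dataclass

# Hypothetical placeholder values, NOT real measurements:
# yearly mean land surface temperature in degrees Celsius, keyed by (place, year).
TOY_LST = {
    ("sacramento", 2005): 20.0,
    ("sacramento", 2015): 21.5,
}


@dataclass
class ParsedQuery:
    place: str
    start_year: int
    end_year: int


def parse_prompt(prompt: str) -> ParsedQuery:
    """Stand-in for the language model step: extract a place and two years."""
    match = re.search(
        r"in ([A-Za-z ]+?) change between (\d{4}) and (\d{4})", prompt
    )
    if match is None:
        raise ValueError("unsupported question")
    place = match.group(1).strip().lower()
    return ParsedQuery(place, int(match.group(2)), int(match.group(3)))


def compute_change(query: ParsedQuery, data: dict) -> float:
    """Difference between the end-year and start-year values for the place."""
    start = data[(query.place, query.start_year)]
    end = data[(query.place, query.end_year)]
    return end - start


def answer(prompt: str, data: dict = TOY_LST) -> str:
    """Run the whole pipeline and phrase the result as a plain-text sentence."""
    query = parse_prompt(prompt)
    delta = compute_change(query, data)
    if delta > 0:
        verb = f"rose by {delta:.1f} °C"
    elif delta < 0:
        verb = f"fell by {abs(delta):.1f} °C"
    else:
        verb = "did not change"
    return (
        f"Average land surface temperature in {query.place.title()} {verb} "
        f"between {query.start_year} and {query.end_year} (toy data)."
    )


if __name__ == "__main__":
    print(answer(
        "How did the average land surface temperature in Sacramento "
        "change between 2005 and 2015?"
    ))
```

In a real system the parsing step would be handled by the language model and the lookup would hit actual data products, but the division of labor between understanding the question, computing on the data, and wording the answer would plausibly look similar.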

Making data and imagery more accessible

When showing this new user interface, Planet made it clear it will not be available anytime soon, but it’s something that has a lot of potential for the future. The proof-of-concept project was developed together with the Microsoft AI for Good Lab, an applied research and data visualization lab that combines big data and Microsoft’s cloud technology. In Planet’s own words, the idea behind the project was to make its data and imagery more accessible by both indexing physical characteristics of life on Earth and making them searchable, in plain and easy-to-understand language, with real-world context about location and time, to empower people with data and insights that can guide better decision-making.

Planet has been working with Microsoft on several projects involving satellite imagery and AI. One example is an assessment of the extent of damage to buildings in the earthquake-affected area in Turkey earlier this year. The next-gen AI interface presented during Planet's latest user conference offers data and insights that can be transformational for businesses, academics, researchers, policymakers, and more. It can also inform supply chain tracking, actions to mitigate the effects of climate change, disaster preparedness and response, and efforts to address food insecurity.