Press Start

Much of my childhood was spent immersed in video games. I spent hours upon hours enjoying all types of games on Nintendo, Sega, and Super Nintendo. Admitting this may show my age, but I know that we all can relate to some era of gaming in which we have spent time (significant amounts of time for some of us!) engaged in the exploration of a world that was created for our entertainment. And I’m sure many of us still do today. My own escapes into the simple bliss of video games have led me to wonder if and how we could make our world of scanning just as captivating and pleasurable.

Some time ago Sean Higgins and I started talking about game engines, specifically some of the testing work I had done with the Unity gaming engine with our scan data and 3D models. He introduced Stingray to me, and since then we have been playing with it in an attempt to see what we could accomplish.

[Editor: read through to the end for a collection of videos demonstrating Coco’s final (and impressive) results.]

Just about anyone can figure out how to use a video game.

The Options Menu

At this point, you may be asking, “Why?” Aside from scanning and modeling everything we can get our hands on, we are sometimes asked to provide visualizations and renderings. While these are great ways of showing an area or concept, they leave much to be desired in the way of an active experience. In the past, it has been exceedingly difficult to give end users the kind of exploratory experience we get as a matter of course while scanning on site. Even with the data at our fingertips back at the office, it is never the same as being in the actual space. Renderings are locked to a single static view, and while animations may cover more ground, the path taken is predefined. Games add autonomy, giving the user the freedom to move about and make their own decisions about which way to go. We have made numerous attempts to use our 3D models as material for game engines, but the level of detail that a gamer is accustomed to far surpasses what we can accomplish with the modeling effort associated with most of our projects.

It is our desire to find a simpler way to deliver the full, rich detail of our scans in a format that any device can read and navigate.

Don’t Blow It

Beyond the typical 3D model spawned from CAD/BIM platforms and explored on a capable computer, the next best thing might seem to be virtual reality. However, I have yet to find a solution that is easy to share with multiple people simultaneously and does not require some sort of awkward headgear and many hours of work setting up the environment. At this time, not everyone has the disposable income to buy into the luxury of high-quality VR, and I am not convinced it is the right medium anyway.

The one thing that seems to be universally accepted and readily available to everyone in this modern world of mobile phones and personal computers is the video game. With the accessibility of inexpensive mobile devices, video games have become one of the first pieces of software we encounter and learn as children, and even the most old-school and technophobic among us can muster the skills needed to play. The advantages of the game app, in contrast to the complex deliverables common to our industry, are easily distributed software updates and online connectivity to data sources and other users, not to mention unparalleled portability.

Easy as Pong

Video: A demo of a model created in Unity 3D.

We have all dreamt of ways to make point clouds easier for everyone. Distributing a point cloud with a lightweight viewer or an online, browser-based viewer with simplified controls is currently the best method, but these viewers fall short of a natural and intuitive experience. One of the most common problems I have found is that when users are given the option to see partially through walls and physically pass through them within the 3D model, they will do so. After blasting through walls like Juggernaut, most users are lost a minute later and have no idea where they are in relation to where they started. In contrast, a game environment often forces the user to move along normal pathways, helping them to build their own mental map and, in turn, deepening their understanding and appreciation of the space. These constraints of the game world are accomplished with what are called colliders and game physics, which help to add realism to the environment. Just a few well-placed 3D sounds and lights in a scene can heighten one’s sense of how confined or open a room may be.
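To make the idea of a collider a little more concrete, here is a minimal sketch in plain Python rather than in any particular game engine. It treats the player as a circle on a floor plan and each wall as an axis-aligned box, and it refuses any move that would put the player inside a wall. The names `Box`, `overlaps_circle`, and `move_player`, along with the 0.3 m player radius, are made up for illustration and are not part of Unity or Stingray.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned rectangle on the floor plan: a stand-in for a wall collider."""
    min_x: float
    min_y: float
    max_x: float
    max_y: float

    def overlaps_circle(self, cx: float, cy: float, radius: float) -> bool:
        # Find the point on the box closest to the circle's centre,
        # then compare that distance with the circle's radius.
        nearest_x = min(max(cx, self.min_x), self.max_x)
        nearest_y = min(max(cy, self.min_y), self.max_y)
        dx = cx - nearest_x
        dy = cy - nearest_y
        return dx * dx + dy * dy < radius * radius


def move_player(pos, step, walls, radius=0.3):
    """Apply a step one axis at a time, skipping any axis that would hit a wall.

    Resolving x and y separately lets the player slide along a wall
    instead of stopping dead. The radius is an assumed player size.
    """
    x, y = pos
    new_x = x + step[0]
    if not any(w.overlaps_circle(new_x, y, radius) for w in walls):
        x = new_x
    new_y = y + step[1]
    if not any(w.overlaps_circle(x, new_y, radius) for w in walls):
        y = new_y
    return (x, y)


if __name__ == "__main__":
    # One thin wall running north-south at x = 1.0 to 1.2.
    walls = [Box(1.0, -5.0, 1.2, 5.0)]
    pos = (0.0, 0.0)
    pos = move_player(pos, (0.5, 0.0), walls)  # free space: the move is allowed
    pos = move_player(pos, (0.5, 0.0), walls)  # would enter the wall: blocked
    print(pos)
```

A check this simple only tests the end point of each step, so a very large step could still jump clean over a thin wall. Real engines guard against that with more thorough collision detection, which is part of what the physics system provides for free.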

The Game Tester

Video: A demo of an interactive model created in Stingray.

For a few years we have been experimenting with Unity, which is a game engine that allows a multitude of content formats and various coding languages to be merged together in the construction of a game and exported to almost every platform you can think of, including all major mobile platforms and web browsers. While we are not fluent in any coding language, we have managed to cobble together a few demo environments by borrowing bits and pieces from the Unity Store and stock Unity content.

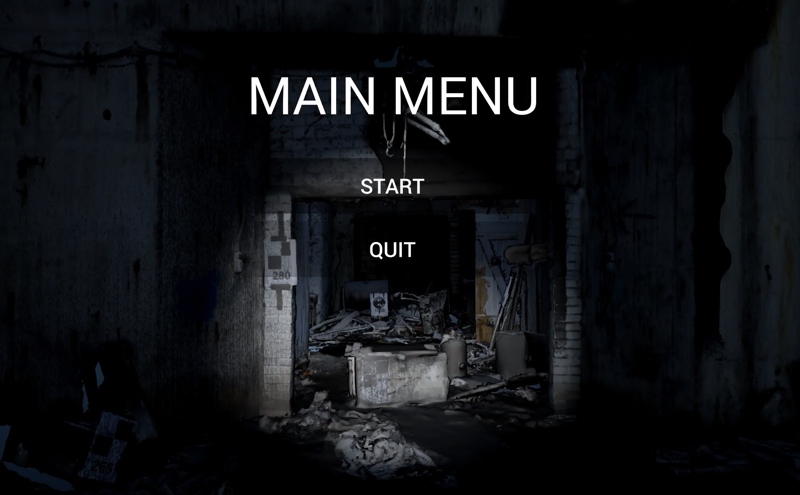

What we are trying to achieve is a simple solution for our clients to explore our scans and models in a more intuitive way. While some of our clients are fluent with CAD software, it is a turnoff for others, and they refuse to even try. After a few attempts to import 3D models created from scan data in Revit or AECOsim, we succeeded in creating game environments that were more navigable than scan data but lacked the lush detail inherent to the scans. “It looks like Minecraft” is a phrase we have heard frequently due to the graphical simplicity. Using Stingray brings about better compatibility with Revit and other Autodesk software, but in the end the textures and geometry are often too basic to be noteworthy. We always knew that our final product was never going to be as impressive as a professionally made video game. Have you ever seen how many people are involved in the production of a top-end game? By numbers alone we never stood a chance, but I think that is about to change…

The Cheat Code

Video: An interactive experience generated using Sequoia.

For a while we just about gave up on pushing this any further than the amount of content scoped for our 3D model…then Sequoia sprouted out of nowhere. If you somehow have not heard of Sequoia, it is basically the holy grail of meshing the un-meshable terrestrial laser scan.

For us, Sequoia brings the possibility of completely sidestepping the modeling process for the purpose of creating rich environments to explore and share. Provided an area is scanned adequately, we no longer need to model all the nuts and bolts or provide tediously Photoshopped texture maps to mimic the original color detail. Sequoia makes texturing from the scan’s color imagery a much simpler process, and also helps the user break up the large mesh into chunks a game engine can digest through its Hacksaw process. While our first tests are far from the quality of today’s high-resolution games, they are much closer to that quality than a typical 3D model. I think in the hands of a skilled game designer these tools could produce amazing results with much less effort. Needless to say, this feels a bit like cheating since we can go straight from scan to mesh to game in a matter of hours.
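For readers wondering what breaking a large mesh into chunks means in practice, the sketch below shows one simple way it could be done: sorting triangles into a regular grid of cells by the position of their centroids. This is an assumption made purely for illustration and is not a description of how Sequoia’s Hacksaw works internally. The function name `chunk_triangles` and the `cell_size` parameter are hypothetical.

```python
import math
from collections import defaultdict


def chunk_triangles(vertices, triangles, cell_size):
    """Group triangles into grid cells keyed by the cell of their centroid.

    vertices  -- list of (x, y, z) tuples
    triangles -- list of (i, j, k) index triples into `vertices`
    cell_size -- edge length of each cubic grid cell, in the mesh's units
    Returns a dict mapping (cell_x, cell_y, cell_z) to a list of triangles.
    """
    chunks = defaultdict(list)
    for tri in triangles:
        # Average the three corner positions to get the triangle's centroid.
        cx = sum(vertices[i][0] for i in tri) / 3.0
        cy = sum(vertices[i][1] for i in tri) / 3.0
        cz = sum(vertices[i][2] for i in tri) / 3.0
        key = (
            math.floor(cx / cell_size),
            math.floor(cy / cell_size),
            math.floor(cz / cell_size),
        )
        chunks[key].append(tri)
    return dict(chunks)


if __name__ == "__main__":
    # Two small triangles about ten units apart along x.
    vertices = [
        (0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0),
        (10.0, 0.0, 0.0), (11.0, 0.0, 0.0), (10.0, 1.0, 0.0),
    ]
    triangles = [(0, 1, 2), (3, 4, 5)]
    for cell, tris in sorted(chunk_triangles(vertices, triangles, 5.0).items()):
        print(cell, tris)
```

Each resulting chunk can then be loaded as its own object, so an engine only has to draw the pieces near the player rather than the entire scan at once.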

Credits:

Bunker scanned and provided by Dr. Christian Hesse

Crime house scanned and provided by Eugene Liscio

Textured meshes provided by Thinkbox Team

Here is a quick look at a couple of demo projects navigated using the Xbox One controller on PC. Sorry, there is no distributable game to play on your own…maybe next time.

- Quick Demo: https://www.youtube.com/watch?v=XEkMbYNxQG8

- Full Demo 4k: https://www.youtube.com/watch?v=D6-HkIkRJdY

- Alt. Full Demo Bright Ver: https://www.youtube.com/watch?v=cprebNGpLwo&feature=youtu.be

Other related videos:

- Model Demo in Stingray for comparison: https://www.youtube.com/watch?edit=vd&v=sHerDvyPRzs

- Model Demos from Unity 3D: https://www.youtube.com/watch?v=orGu1OwLaBA

- Point Clouds in Unity 3D: https://www.youtube.com/watch?v=HH_j-Bc-ank