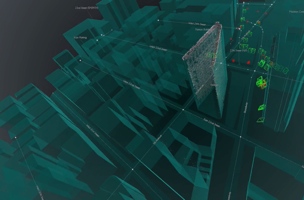

Image source: Mapzen

At the end of The Dark Knight, Bruce Wayne exploits a technology that taps the sensors on every cell phone in Gotham as a means of locating the Joker. The technology is scary, but it might also give us an insight into the future of 3D maps.

The idea is actually pretty ingenious. So, as a thought experiment, let’s ask: what could aggregating cell phone data do for the 3D imaging industry? To avoid the moral complications of sourcing data from people who haven’t given consent, let’s say we just send a team out to take pictures of the same building with their iPhones and personal cameras.

Is there a simple, useful way to use their data to create point clouds and update a 3D map? In a blog post at Mapzen, Patricio Gonzalez Vivo put that idea through its paces.

First, he took pictures of the iconic Flatiron Building in NYC using different cameras at different times of day. Afterward, he processed them using open-source software to generate point clouds. The resulting point cloud was coherent. So far, so good.

The problem came when he tried to georeference his data. To do this, he took even more photos with his cell phone and used the GPS information embedded in the photos. With this data, he was able to extract the centroid, approximate scale, and base rotation “to level the cameras over the surface.”
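
To make the centroid, scale, and rotation idea concrete, here is a minimal sketch of a 2D fit between camera positions in the reconstructed model and the GPS fixes from the matching photos. It is not the method from the Mapzen post, which works in 3D and levels the cameras as well. The function names, the flat-earth projection, and the assumption that each model camera position is paired with one GPS fix are all illustrative.

```python
import math

EARTH_RADIUS_M = 6371000.0  # mean Earth radius; spherical approximation


def gps_to_local_meters(fixes):
    """Project (lat, lon) degrees to (east, north) meters around their centroid.

    Uses a flat-earth approximation, which is reasonable over a city block.
    """
    lat0 = sum(lat for lat, _ in fixes) / len(fixes)
    lon0 = sum(lon for _, lon in fixes) / len(fixes)
    cos_lat0 = math.cos(math.radians(lat0))
    local = [
        (math.radians(lon - lon0) * EARTH_RADIUS_M * cos_lat0,
         math.radians(lat - lat0) * EARTH_RADIUS_M)
        for lat, lon in fixes
    ]
    return (lat0, lon0), local


def centroid(xy):
    """Mean position of a list of 2D points."""
    return (sum(x for x, _ in xy) / len(xy), sum(y for _, y in xy) / len(xy))


def fit_similarity_2d(model_xy, gps_xy):
    """Least-squares scale and rotation taking centered model points to GPS points.

    model_xy[i] and gps_xy[i] must describe the same camera.
    Returns (meters per model unit, rotation in radians, counterclockwise).
    """
    mcx, mcy = centroid(model_xy)
    gcx, gcy = centroid(gps_xy)
    dot = cross = model_sq = 0.0
    for (mx, my), (gx, gy) in zip(model_xy, gps_xy):
        mx, my, gx, gy = mx - mcx, my - mcy, gx - gcx, gy - gcy
        dot += mx * gx + my * gy
        cross += mx * gy - my * gx
        model_sq += mx * mx + my * my
    scale = math.hypot(dot, cross) / model_sq
    rotation = math.atan2(cross, dot)
    return scale, rotation
```

The centroid of the GPS fixes anchors the model on the map, while the scale and rotation say how much to stretch and turn it. Because this is a least-squares fit, a few bad GPS fixes can pull the result noticeably off.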

Here’s the tough part: He had to make the final adjustments by hand because of the urban canyon effect. If you’ve ever tried to georeference your data while working in a city, you’ve probably run into this problem. GPS satellite signals “bounce between buildings,” which caused “incredible noise in the GPS data” that he tried to use.
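
Gonzalez Vivo corrected for this noise by hand. As a rough illustration of how some of it might be screened automatically, the sketch below drops GPS fixes that sit unusually far from the median position. The function name, the threshold, and the choice of a median absolute deviation (MAD) test are assumptions made for this example, not something taken from the original post.

```python
import statistics


def filter_gps_outliers(fixes, threshold=3.0):
    """Keep (east, north) fixes, in meters, that lie close to the median position.

    A fix is dropped when its distance from the median position exceeds the
    median distance by more than `threshold` scaled MADs.
    """
    med_e = statistics.median(e for e, _ in fixes)
    med_n = statistics.median(n for _, n in fixes)
    dists = [math_dist(e - med_e, n - med_n) for e, n in fixes]
    med_d = statistics.median(dists)
    mad = statistics.median(abs(d - med_d) for d in dists)
    if mad == 0:
        # No measurable spread to judge against, so keep every fix.
        return list(fixes)
    # 1.4826 scales the MAD to match a standard deviation for normal data.
    limit = med_d + threshold * 1.4826 * mad
    return [fix for fix, d in zip(fixes, dists) if d <= limit]


def math_dist(dx, dy):
    """Euclidean length of a 2D offset."""
    return (dx * dx + dy * dy) ** 0.5
```

A filter like this only removes isolated bad fixes. If reflections shift every fix on one side of a street in the same direction, the median shifts too, which is one reason manual adjustment can still be needed.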

With all that, Gonzalez Vivo managed to generate a pretty good model of the Flatiron Building. He then used open-source software to convert the point cloud to a mesh, and the mesh to polygons.

Using freely available software and a bunch of cell phone photographs, he updated his map. He had “crowdsourced” photography and answered the question, “what if people could contribute to open source 3D maps just by taking and sharing photos?”

There are obviously still a lot of kinks in the process, but it works. There are plenty of ways we might use something like this in the future. Imagine if Apple asked its users to opt in and allow their photographs to be geotagged and used to update the 3D models in the Maps app.

Though the moral ground may be rocky, it’s possible (and even likely) that we’ll be crowdsourcing 3D models from public data before we know it.

For more technical details, refer to the original post.