This image was synthesized by a computer.

Photogrammetry has been around a while, and it has proven to be especially useful in the age of the UAV. Fly around a building or a bridge, snap thousands of pictures, and then plug them into a machine that will build a 3D model. It has stood the test of the time because it offers reasonably precise measurements and it has become easy to perform with new software solutions on the market.

What if you need a virtual reality model for presentation purposes, but you only have photographs and didn’t get complete coverage of the environment? Wouldn’t it be great if you could have a computer fill in the blanks? Researchers at Google have developed a machine-learning interface that can do just that. Meet DeepStereo.

The technology was developed to fill in the blank spaces between sequential Google Street View shots to create convincing videos, and it works shockingly well. But that’s not all it can do–John Flynn and the other Google researchers say it can be used for creating convincing virtual reality models from 2D shots and adding a third dimension to 2D film footage.

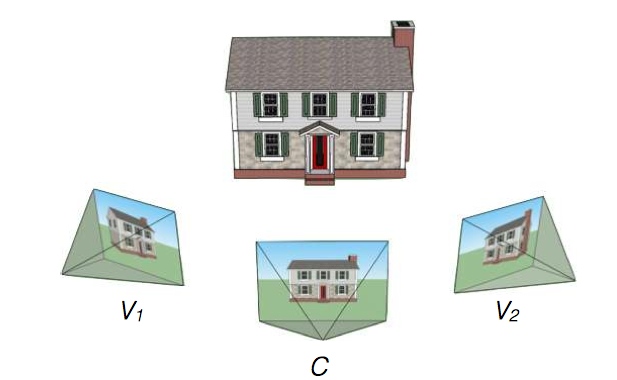

According to a paper the team published, DeepStereo works by synthesizing “a new view of scene by warping and combining images from nearby posed images.” As illustrated in the figure below, it can take two images of a house that were snapped from 45 degree angles and synthesize an image of how it would look head on.

This in itself doesn’t seem super impressive, as many have done this before. However, most previous solutions to this problem have problems with occluded areas. In these areas, they produce tearing, eliminate fine structures, and show obvious aliasing. In other words, when you look at the pictures they generate, it’s obvious that it was generated by a machine that had reached the limits of its ability.

DeepStereo is different. It is a machine-learning interface that can be trained to made an educated guess for what the missing image would look like. The training is faily simple, too: the team needs only to feed it photographs of similar objects. In the paper published by the team, they trained DeepStereo by feeding it about 100,000 sequences of Street View photographs.

When it came time to test, they removed an image from a sequence and used DeepStereo to generate a new one. The results are eerily accurate, and oddly difficult to distinguish from the real photograph.

One of these images was generated by DeepStereo. The other one is a photograph.

Obviously, there are limitations. Right now the deep networks required to generate the images “would be prohibitively expensive in RAM,” to process. According to the paper, it takes about “12 minutes on a multi-core workstation to render a 512×512 pixel image.”

On top of that, DeepStereo constructs the images using only 96 depth planes, meaning that a pixel is assumed to exist on one of 96 planes positioned at set distances from the camera. This makes any sort of accurate measurement impossible.

Another problem that should be harder to overcome: the machine can’t guess what a surface looks like if it doesn’t show up in any of the inputs. This should be obvious, though, if you want to model the back of a building, you have to take some pictures of the back of the building. Machine learning isn’t psychic.

The last problem, though, could be a big one: the system can learn if you train it, but gathering all the training data could be difficult. One solution, as used by CivilMaps for their own deep-learning automatic machine modeling process, is to gather as many customers as possible and use their data to help train the machine.

If Google were ever to make DeepStereo available to the public, there’s no doubt it would accrue all kinds of training quite quickly, and become weirdly competent at predicting how an object looks in 3D space.

Welcome to the future.