It’s well documented that our bridges and other infrastructure need more attention, and that our methods for inspecting them are inadequate. The ARIA (Aerial Robotic Infrastructure Analyst) project aims to fix this problem. A collaboration between Carnegie Mellon University’s Robotics Institute and Northeastern University, the project is working to automate the entire inspection process by bringing together UAVs, robotics, remote sensing, modeling, and simulation technologies.

The ARIA team is doing very innovative things in a number of areas, and the project has a lot of moving parts. Today I’ll be focusing on the coolest part: their robotic inspection assistant.

Pac-Man + UAV

The ARIA team describes the assistant as an “inspector’s apprentice, learning to accomplish inspection tasks with various levels of autonomy.”

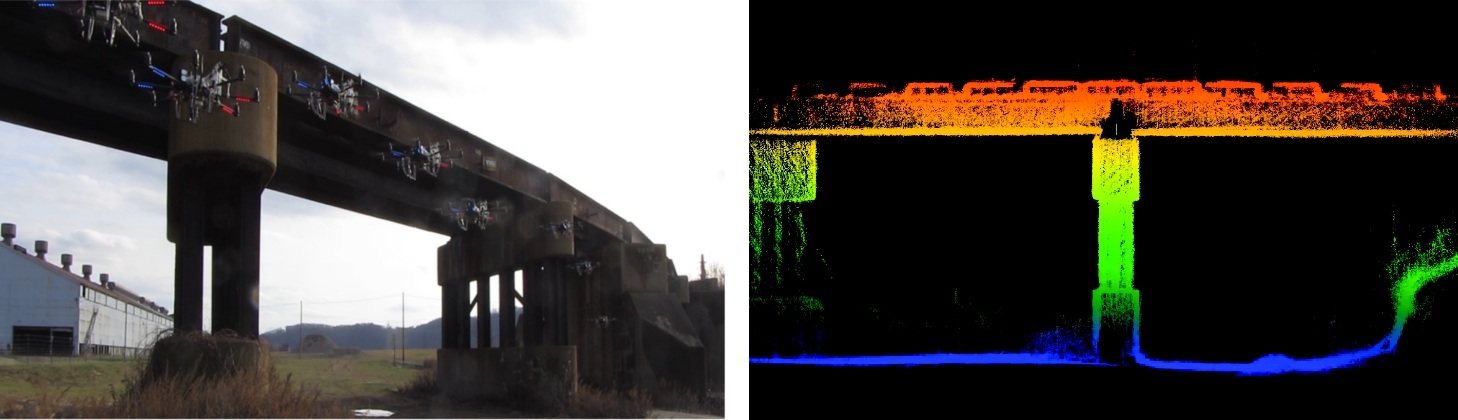

Developing a UAV-based robot that automatically performs infrastructure inspection is a big task. However, the ARIA team has already shown proof of concept using an off-the-shelf UAV, a single-line laser scanner that gathers only 40,000 points per second, a few cameras, a small Intel computer, and Wi-Fi connectivity.

As Luke Yoder, a CMU roboticist on the team, explained during his SPAR International presentation, getting a UAV to scan a bridge autonomously presents a number of challenges. For instance, the team needed to devise their own method of locating the UAV when GPS signals cut out below the bridge, a problem they seem to have well in hand using their own particular SLAM (simultaneous localization and mapping) method. The method, which estimates the UAV’s movement by comparing how physical features shift between successive laser scans and photographs, isn’t perfect. However, it seems to be good enough to prevent the vehicle from running into the bridge as it’s scanning.
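To make the "compare features between scans" idea concrete, here is a minimal sketch of my own, not ARIA's code. It assumes the hard part is already done: the same physical features have been matched between two consecutive scans, and the problem is flattened to 2D. The function name `estimate_rigid_motion` and the point format are hypothetical. It fits the least-squares rotation and translation that carry the earlier feature positions onto the later ones.

```python
import math


def estimate_rigid_motion(prev_pts, curr_pts):
    """Fit (theta, tx, ty) so that curr ~= R(theta) * prev + t in the least-squares sense.

    prev_pts and curr_pts are equal-length lists of (x, y) tuples, where
    prev_pts[i] and curr_pts[i] are assumed to be the same physical feature
    seen in two consecutive scans (the matching step is not shown here).
    """
    n = len(prev_pts)
    if n < 2 or n != len(curr_pts):
        raise ValueError("need at least two matched point pairs")

    # Centroids of both point sets.
    pcx = sum(p[0] for p in prev_pts) / n
    pcy = sum(p[1] for p in prev_pts) / n
    ccx = sum(c[0] for c in curr_pts) / n
    ccy = sum(c[1] for c in curr_pts) / n

    # Accumulate dot and cross products of the centered pairs; their ratio
    # gives the best-fit rotation angle in 2D.
    dot = 0.0
    cross = 0.0
    for (px, py), (cx, cy) in zip(prev_pts, curr_pts):
        ax, ay = px - pcx, py - pcy
        bx, by = cx - ccx, cy - ccy
        dot += ax * bx + ay * by
        cross += ax * by - ay * bx
    theta = math.atan2(cross, dot)

    # Translation is whatever is left after rotating the first centroid.
    c, s = math.cos(theta), math.sin(theta)
    tx = ccx - (c * pcx - s * pcy)
    ty = ccy - (s * pcx + c * pcy)
    return theta, tx, ty
```

If the features are fixed in the world and measured in the sensor's own frame, the vehicle's motion is the inverse of the transform this returns. A real system also has to cope with bad matches, noise, and drift that accumulates over many scans, which is presumably where the "isn't perfect" comes in.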

A more interesting problem: the team needed to figure out how the vehicle could “remember” which parts of the asset had already been scanned, and whether or not there was anything left to scan.

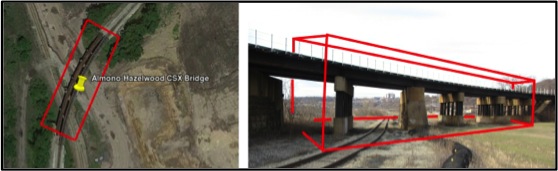

To accomplish this task, they’ve developed a system that can automatically scan and model any structure within an area defined by the user. Yoder explained how the system would work: a worker takes a flying robot into the field, opens up a GIS program, and draws a 3D bounding box around the structure to be inspected. Next, the vehicle autonomously and rapidly scans all surfaces within that box.

Remember, Yoder says, “the only information the robot gets is the bounding box. So how do we write software that guides the robot through the bounding box to observe all surfaces?”

ARIA developed an ingenious algorithm that detects where the scanned areas end and the unscanned areas begin. The system then moves the vehicle to the edge of the scanned surface, over and over again, to keep scanning. It was described to me as being like Pac-Man, continuing to eat all the unscanned space left in the box, little by little.
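The description resembles what roboticists often call frontier-based exploration, though I can't confirm that's what ARIA's algorithm actually is. As a rough sketch under that assumption, and simplified to a flat 2D grid instead of 3D surfaces, a "frontier" is a cell already known to be free that sits next to a cell nobody has scanned yet. The cell labels and the `find_frontiers` name are my own inventions for illustration.

```python
# Hypothetical cell labels for a 2D map of the bounding box.
UNKNOWN, FREE, OCCUPIED = -1, 0, 1


def find_frontiers(grid):
    """Return (row, col) of every FREE cell with a 4-connected UNKNOWN neighbor."""
    rows, cols = len(grid), len(grid[0])
    frontiers = []
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != FREE:
                continue
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                nr, nc = r + dr, c + dc
                if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == UNKNOWN:
                    frontiers.append((r, c))
                    break  # one unknown neighbor is enough
    return frontiers


# A tiny partially scanned map: the right-hand column is mostly unscanned.
example = [
    [FREE, FREE,     UNKNOWN],
    [FREE, OCCUPIED, UNKNOWN],
    [FREE, FREE,     FREE],
]
print(find_frontiers(example))  # [(0, 1), (2, 2)]
```

Flying to one of those frontier cells, scanning, and recomputing the frontiers is the "eat the next dot" step of the Pac-Man analogy.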

The system even knows when to stop, Yoder says. “After the vehicle has been exploring the environment, the algorithm can detect when modeling is complete. It can detect if there are surfaces in the environment that have not been modeled or if it was able to reach all surfaces.”
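Here is one way that stopping rule could look, again as my own toy sketch and not ARIA's implementation. It assumes a 2D grid world, a made-up sensor that reveals only the vehicle's cell and its four neighbors, and a vehicle that can jump straight to any reachable cell. Exploration ends when no reachable free cell borders unscanned space; anything still unknown at that point is reported as unreachable. All names (`sense`, `nearest_frontier`, `explore`) are hypothetical.

```python
from collections import deque

UNKNOWN, FREE, OCCUPIED = -1, 0, 1
NEIGHBORS = ((1, 0), (-1, 0), (0, 1), (0, -1))


def sense(known, truth, pos):
    """Copy the true state of the vehicle's cell and its 4 neighbors into the map."""
    r0, c0 = pos
    for dr, dc in ((0, 0),) + NEIGHBORS:
        r, c = r0 + dr, c0 + dc
        if 0 <= r < len(truth) and 0 <= c < len(truth[0]):
            known[r][c] = truth[r][c]


def nearest_frontier(known, start):
    """Breadth-first search over known FREE cells from start.

    Returns the first cell found that borders an UNKNOWN cell, or None
    when no reachable cell borders unscanned space.
    """
    rows, cols = len(known), len(known[0])
    seen = {start}
    queue = deque([start])
    while queue:
        r, c = queue.popleft()
        for dr, dc in NEIGHBORS:
            nr, nc = r + dr, c + dc
            if not (0 <= nr < rows and 0 <= nc < cols):
                continue
            if known[nr][nc] == UNKNOWN:
                return (r, c)
            if known[nr][nc] == FREE and (nr, nc) not in seen:
                seen.add((nr, nc))
                queue.append((nr, nc))
    return None


def explore(truth, start):
    """Explore until no reachable frontier remains.

    start is assumed to be a FREE cell. Returns the final map and the
    number of cells that could not be observed.
    """
    known = [[UNKNOWN] * len(truth[0]) for _ in truth]
    pos = start
    sense(known, truth, pos)
    while True:
        target = nearest_frontier(known, pos)
        if target is None:
            break  # modeling is complete as far as the vehicle can tell
        pos = target
        sense(known, truth, pos)
    unreached = sum(row.count(UNKNOWN) for row in known)
    return known, unreached
```

Each pass through the loop reveals at least one new cell, so the loop always ends. A nonzero `unreached` count is the toy version of the system noticing that some surfaces were never modeled, for example a pocket that is completely walled off.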

The results are pretty incredible. Note that, though this was only a prototype, the inspection detailed in the video below was completely autonomous and took a total of 5 minutes to complete. No prior model of the structure was needed; the whole process started fresh.

Even though it could someday work totally autonomously, the ARIA team doesn’t intend for their robot to be used that way just yet. In an article they wrote for SPIE, they explained that “the ARIA robot is intended to work interactively with an inspector using planning and controlling algorithms that learn an inspector’s preferences based on observations and then adapt their operation to meet those needs. Such algorithms will enable the robot to act as an assistant or apprentice to the inspector, adjusting its automation level appropriately to the situation.”

What’s Next?

The other parts of the ARIA project (which could make for excellent blog posts on their own) involve using computer vision to aid in the inspection process, generating various smart models from the point clouds gathered by the UAV robot, and building an immersive environment that allows users to interact with the data and the robot itself.

Maybe the most enticing thing I can leave you with is this vision of the future from the ARIA team:

“Consider this future scenario. An inspector arrives at a bridge site and removes a large suitcase from the trunk of her car. She opens the case and pulls out ARIAbot – a small multi-rotor MAV – and a tablet computer. After a couple of pre-flight diagnostics, ARIAbot takes off. It begins by making a quick pass over and under the bridge. On the computer, a rough 3D model of the bridge begins to take shape. Different components are automatically identified and segmented into objects – footings, columns, beams, girders, and the deck. ARIAbot conducts the standard inspection process, collecting 3D data and close-range imagery of all the critical components. The inspector notices that from the previous year’s report, one of the girders has some significant cracking. She highlights the girder for a detailed analysis. ARIAbot flies in for a closer look at the problem girder, applying algorithms to assess the location and size of the cracks. The result is overlaid on the integrated 3D model and linked to the model from the previous inspection. Flipping between the two models, the inspector can see the crack is getting worse. The inspector requests a simulation, and ARIAbot translates the 3D model into a finite element model (FEM) that incorporates the revised crack detections. The simulation results show that the bridge is safe for now, but estimates that a repair will be needed in a year – several years sooner than planned. Later, back at the Department of Transportation, the results of the inspection are transferred into the Bridge Management System, and the repair is scheduled for the following spring.”

Check out ARIA’s website for more information.