During his #SPAR15 keynote (which I’ve covered here), Dave Truch explained why BP keeps virtual models of facilities all the way through to the operations phase. The benefits of maintaining a model throughout an asset’s lifecycle seem clear–they enable change detection, training, maintenance planning, and more–so why isn’t everyone using them?

The challenges involved in keeping a model updated are great. Point clouds can be simple enough to gather, but you need to process them, identifying objects before they can be used in a modeling or design environment. The industry has developed software that saves a lot of work by automating that task, but there’s still room to improve. Here is a smart solution I saw at SPAR 2015.

What about deep learning?

When I attended Bentley’s Year in Infrastructure 2014, one of Bentley’s research fellows mentioned that a process called “deep learning” could one day accelerate automatic modeling a great deal. Deep learning trains multi-layered neural networks, usually on a cloud network of computers or one big supercomputer, to recognize objects from examples. Google uses deep learning, he said, so I wondered why I had never heard of anyone using it to automatically model from point clouds.

At SPAR, I found out that at least one company uses it. Sravan Puttagunta presented on how his company Civil Maps uses deep learning to create maps from LiDAR data. He started by explaining more about how deep learning works: “Deep learning is similar to how a human develops their brain. We have context, so we recognize patterns in various objects and then we classify those objects based on those patterns.”

Using a deep-learning platform, he explained, is different from writing algorithms. “You might spend two months writing an algorithm for a particular asset that you are interested in extracting from the point cloud, but we’re actually not creating algorithms. We’re creating a self-learning system that tries out many different algorithms before it finds the right one.”

That is, deep learning takes auto-recognition a step further: instead of paying a software engineer to write a program that recognizes a certain set of assets, you send your data to a network of computers that “writes” that auto-recognition program without a human writing the rules. As you might guess, the particulars are a little too complicated to go into here, but for you technical wonks, Sravan wrote a great post on his blog about the topic.
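For the wonks who want something to poke at right away, here is a minimal sketch of the "learn the rule instead of writing it" idea. To be clear about the assumptions: this is not Civil Maps's system, and it is not a deep network at all. It is a single-layer logistic classifier, the simplest learned model I could swap in, trained on synthetic clusters that stand in for scan data. Every name in it (`cluster_features`, `make_cluster`, `train`, and so on) is hypothetical. Nobody hand-codes "poles are tall and thin"; the program works that out from labeled examples.

```python
import math
import random


def cluster_features(points):
    """Reduce a cluster of (x, y, z) points to [vertical extent, horizontal spread]."""
    xs, ys, zs = zip(*points)
    height = max(zs) - min(zs)
    spread = max(max(xs) - min(xs), max(ys) - min(ys))
    return [height, spread]


def make_cluster(kind, rng, n=50):
    """Synthetic stand-in for scan data (an assumption, not real LiDAR)."""
    if kind == "pole":  # tall and thin
        return [(rng.gauss(0, 0.1), rng.gauss(0, 0.1), rng.uniform(0, 8))
                for _ in range(n)]
    # Anything else is treated as a low, wide ground patch.
    return [(rng.uniform(-4, 4), rng.uniform(-4, 4), rng.gauss(0, 0.1))
            for _ in range(n)]


def make_dataset(rng, per_class):
    """Build labeled feature vectors: 1 = pole, 0 = ground."""
    samples, labels = [], []
    for _ in range(per_class):
        samples.append(cluster_features(make_cluster("pole", rng)))
        labels.append(1)
        samples.append(cluster_features(make_cluster("ground", rng)))
        labels.append(0)
    return samples, labels


def train(samples, labels, epochs=200, lr=0.1):
    """Fit logistic-regression weights with plain stochastic gradient descent."""
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            z = w[0] * x[0] + w[1] * x[1] + b
            z = max(-60.0, min(60.0, z))  # keep exp() in a safe range
            p = 1.0 / (1.0 + math.exp(-z))
            err = p - y  # gradient of the log loss with respect to z
            w[0] -= lr * err * x[0]
            w[1] -= lr * err * x[1]
            b -= lr * err
    return w, b


def predict(model, x):
    """Return 1 (pole) or 0 (ground) for one feature vector."""
    w, b = model
    return 1 if w[0] * x[0] + w[1] * x[1] + b > 0 else 0


# Train on one batch of synthetic clusters, then score on a fresh batch.
train_x, train_y = make_dataset(random.Random(0), per_class=20)
model = train(train_x, train_y)
test_x, test_y = make_dataset(random.Random(1), per_class=20)
accuracy = sum(predict(model, x) == y for x, y in zip(test_x, test_y)) / len(test_y)
print(f"held-out accuracy: {accuracy:.2f}")
```

On toy data this clean, the learned weights should end up rewarding height and penalizing spread, which is the rule an engineer would have written by hand. A real deep-learning system works from far messier data and learns its own features as well, rather than being handed two tidy numbers per cluster.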

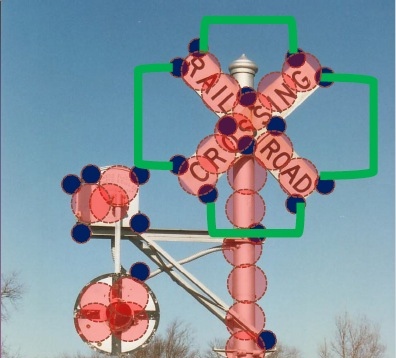

After showing a few case studies where Civil Maps has proved its deep learning technique for recognizing power lines, telephone poles, and so on, Sravan explained that the system gets better at a task with practice. “By uploading and sharing your unorganized 3D data, what you’re essentially doing is contributing to an artificial intelligence that gets smarter over time.”

Maybe someday it will be smart enough to turn your point cloud into a model as you scan.