In my last posting I tried to make a few points about the attribution of features collected via LiDAR. I mentioned that one way of acquiring the detailed attributes of a feature is to read and record, in the field, the information attached to the object itself: the manufacturer's tag. There is, of course, more than one way to record that data.

For example, writing the attribute information in a field book works; however, it requires later manual input to convert the written data to digital form. This approach is inefficient and introduces errors that could otherwise be avoided.

Bar-coded tags, other types of optically read codes, or numbered stickers on the features provide a way around manual input in the field and in the office. However, they are most often used to introduce a key into the data stream. In those cases, the key matches one or more records in a database where the actual attributes of interest were entered previously. This amounts to kicking the can down the road, because it again raises the question of how the database itself is to be properly populated.

There are several other ways to collect attribute data, but even when that data is finally acquired and in digital form, there is another hurdle: the compiled digital attributes need to be associated with the digital representation of the precise feature to which they apply. These two elements must be joined so that whenever the feature is queried, it appears with the correct attributes. Building the data path from acquisition in the field to serving the combined attribute and scan data to the user can be difficult.

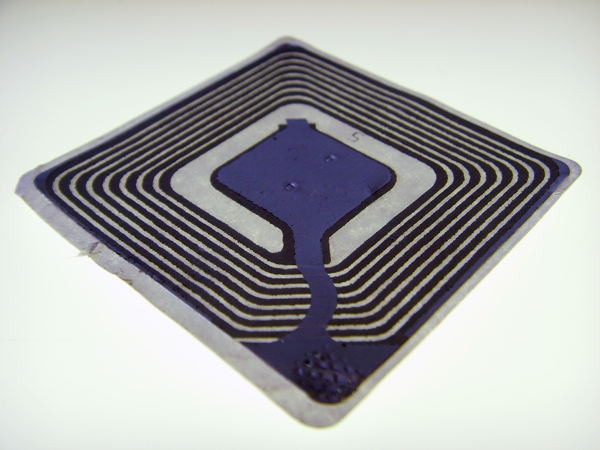

There is an approach that has the potential to solve both challenges by automatically collecting a feature’s attributes and conflating those attributes with the feature’s point cloud. The method starts by encoding all the necessary attributes of a feature on optically readable or radio frequency identification (RFID) tags. The encoded tags are then physically attached to the features of interest. With either type of tag, the encoded attributes can be read automatically by a sensor. This solves the collection part of the problem, but the conflation difficulty remains.
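To make the encoding step concrete, here is a minimal sketch of packing a feature's attributes into a compact payload that could be written to a tag and decoded again after a sensor reads it. The choice of compact JSON, the 512-byte budget, and the example attribute names are all assumptions for illustration; real capacity depends on the tag type (QR version and error-correction level, RFID user-memory size, and so on), and an actual deployment would follow whatever schema the asset owner defines.

```python
import json

# Hypothetical capacity budget in bytes; the real limit depends on the tag type.
MAX_PAYLOAD_BYTES = 512


def encode_attributes(attrs: dict) -> bytes:
    """Serialize a feature's attributes into a compact UTF-8 payload for a tag."""
    # Compact separators and sorted keys keep the payload small and deterministic.
    payload = json.dumps(attrs, separators=(",", ":"), sort_keys=True).encode("utf-8")
    if len(payload) > MAX_PAYLOAD_BYTES:
        raise ValueError(f"payload is {len(payload)} bytes; limit is {MAX_PAYLOAD_BYTES}")
    return payload


def decode_attributes(payload: bytes) -> dict:
    """Recover the attribute dictionary from the bytes a sensor read off a tag."""
    return json.loads(payload.decode("utf-8"))


# Usage: hypothetical attributes for a valve, round-tripped through a tag payload.
valve = {"type": "gate valve", "model": "GV-200", "size_in": 8, "pressure_class": 150}
tag_bytes = encode_attributes(valve)
print(decode_attributes(tag_bytes) == valve)
```

Carrying the attributes themselves, rather than a key, is what avoids the database-population problem described earlier; the trade-off is that everything must fit within the tag's capacity.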

One of the most significant advantages of 3D laser scanning (3DLS) technology, when it is properly applied, is its efficiency in producing the correct relative and absolute position of everything in the scan in three dimensions. In other words, the position of every feature is available in the data collected. If the 3D position of each encoded tag on each feature is also known, an automatic routine can correlate the attributes read from those tags with the features to which they apply. The challenge, then, is determining the position of every encoded tag.
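One simple form such an automatic routine could take is a nearest-neighbour match: each tag's surveyed position is compared with the feature positions derived from the scan, and the tag's attributes go to the closest feature within a tolerance. The sketch below assumes the point cloud has already been segmented into features, each summarized by a hypothetical ID and a 3D centroid in the same coordinate system as the tag positions. The tolerance and the "first tag wins" rule for a feature claimed twice are illustrative choices, not a prescribed method.

```python
import math
from typing import Dict, List, Optional, Tuple

Point = Tuple[float, float, float]


def attach_attributes(
    features: Dict[str, Point],
    tags: List[Tuple[Point, dict]],
    max_distance: float,
) -> Tuple[Dict[str, dict], List[dict]]:
    """Assign each tag's attributes to the nearest feature centroid.

    features:     hypothetical feature IDs mapped to centroids taken from the scan
    tags:         (surveyed tag position, decoded attributes) pairs
    max_distance: tolerance, in the same units as the coordinates

    Returns the attributed features and the attributes of tags left unresolved,
    either because no feature lies within tolerance or because the nearest
    feature was already claimed by an earlier tag.
    """
    attributed: Dict[str, dict] = {}
    unresolved: List[dict] = []
    for tag_pos, attrs in tags:
        best_id: Optional[str] = None
        best_d = math.inf
        # Brute-force nearest-neighbour search over all feature centroids.
        for feature_id, centroid in features.items():
            d = math.dist(tag_pos, centroid)
            if d < best_d:
                best_id, best_d = feature_id, d
        if best_id is None or best_d > max_distance or best_id in attributed:
            unresolved.append(attrs)  # flag for manual review
        else:
            attributed[best_id] = attrs
    return attributed, unresolved


# Usage with made-up coordinates (metres).
features = {"valve-1": (10.0, 5.0, 1.2), "valve-2": (14.0, 5.0, 1.2)}
tags = [((10.1, 5.05, 1.3), {"model": "GV-200"}), ((30.0, 0.0, 0.0), {"model": "X-1"})]
attributed, unresolved = attach_attributes(features, tags, max_distance=0.5)
```

The tolerance has to be smaller than the spacing between neighbouring features, which is why tag positioning accuracy matters. For large scans, a spatial index such as a k-d tree would replace the brute-force loop.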

There are several methods to accomplish this. In outdoor settings, GPS is an obvious option. If it is used for such work, note that where features are densely packed, differentially corrected measurements are needed, because an uncorrected position can be off by more than the spacing between neighboring features. In indoor settings, robotic total stations can be used to collect the positions of the encoded tags. This arrangement, of course, requires a control network from which the instruments can be correctly oriented.
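The role of the control network can be seen in the basic radiation computation a total station performs. The instrument occupies one control point and is oriented by a backsight to another. Each observation to a tag (horizontal circle reading, zenith angle, slope distance) is then reduced to grid coordinates. The sketch below is a minimal, assumption-laden version: angles are in radians, azimuths are measured clockwise from grid north, and curvature, refraction, and scale-factor corrections are ignored, which is only reasonable over the short ranges typical indoors. The function and parameter names are hypothetical.

```python
import math


def azimuth(from_en, to_en):
    """Grid azimuth (radians, clockwise from north) between two (E, N) points."""
    d_e = to_en[0] - from_en[0]
    d_n = to_en[1] - from_en[1]
    return math.atan2(d_e, d_n) % (2 * math.pi)


def tag_position(station, backsight, bs_reading, hz_reading, zenith,
                 slope_dist, inst_height, target_height=0.0):
    """(E, N, Z) of a tag from one total-station observation.

    station:    (E, N, Z) of the occupied control point
    backsight:  (E, N) of the backsight control point
    bs_reading: horizontal circle reading taken on the backsight
    hz_reading: horizontal circle reading taken on the tag
    Angles in radians; no curvature, refraction, or scale corrections.
    """
    # Orientation: the known backsight azimuth plus the turned angle to the tag.
    az = (azimuth(station[:2], backsight) + hz_reading - bs_reading) % (2 * math.pi)
    hd = slope_dist * math.sin(zenith)          # horizontal distance
    e = station[0] + hd * math.sin(az)
    n = station[1] + hd * math.cos(az)
    z = station[2] + inst_height + slope_dist * math.cos(zenith) - target_height
    return e, n, z


# Usage: backsight due north, tag turned 90 degrees to the right, level shot.
print(tag_position((1000.0, 2000.0, 50.0), (1000.0, 2100.0), 0.0,
                   math.pi / 2, math.pi / 2, 10.0, 1.5))
```

Without the two known control points there is no azimuth to start from, and every tag position inherits that orientation error.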

This is one approach, and there are certainly others. The overriding objective is the automatic attribution of features in 3DLS scans in circumstances where those attributes, and their correlation with the features, are not already established by other means.