Mobile mapping technology is hugely valuable. Imagine scanning a strip of road or a mini-mall without it (you probably don’t want to). However, it has one unavoidable limitation: a reliance on GPS.

GPS is part of the suite of sensors that a mobile mapping system uses to determine its bearing, its position relative to the objects it’s scanning, its speed, and so on. Without detailed information on the scanner’s location and orientation for every point it measures, the scan data is essentially useless.

You can imagine that taking this scanner indoors, where GPS won’t work, presents problems. Without satellite coverage, how do you know where the scanner is? You use algorithms for simultaneous localization and mapping (SLAM).

What is SLAM?

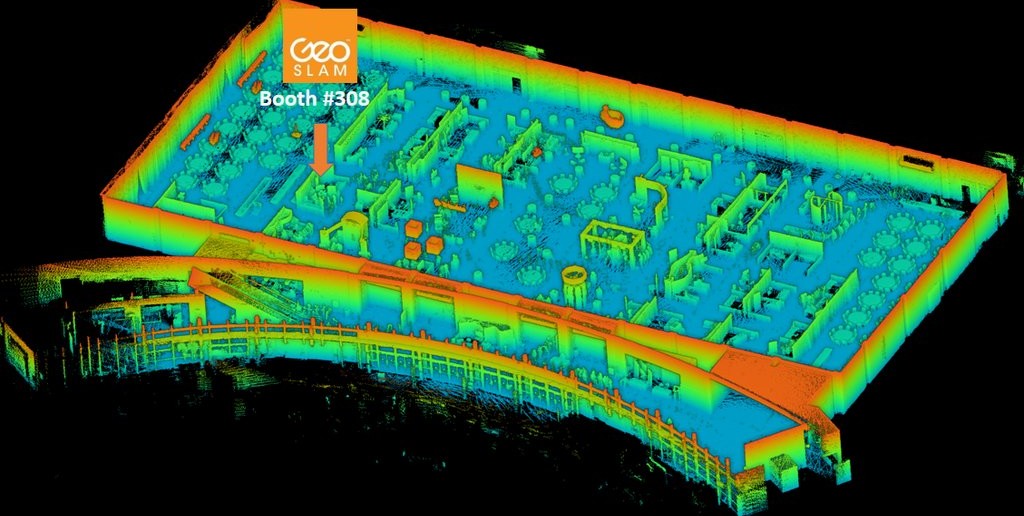

A map of the SPAR ’16 exhibit floor captured with the SurphSLAM.

I’ll do my best, as a technology writer (and not a technologist), to explain this. SLAM algorithms enable a device to build a map of its surrounding environment while, at the same time, locating itself within that map. These are two sides of the same coin, and you won’t get one without the other. The technology comes from robotics. Researchers wanted to place a robot in a new environment and enable that robot to teach itself to navigate that environment, all without accessing any pre-coded information. (For more information, check OpenSLAM’s description here.)

In addition to guiding robots around, SLAM can enable you to map indoors without GPS. There’s a catch: SLAM algorithms are not 100% perfect (for a litany of reasons I can only vaguely understand) at estimating position. The technology does its job beautifully at short distances, but gets less and less accurate the farther you venture from your starting point.
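To get a feel for why error grows with distance, here is a toy sketch in Python. It is not SLAM and not any vendor's algorithm: it only models a device walking a straight line while its heading estimate picks up a small random error at every step. The function names, the 0.5-degree noise figure, and the one-meter step are all assumptions made up for illustration.

```python
import math
import random


def simulate_drift(num_steps, heading_noise_deg=0.5, step_length=1.0, seed=0):
    """Return the position error (in meters) after each step of a straight walk.

    Toy model (an assumption, not a real SLAM pipeline): the true path runs
    along the x axis, and the estimated heading accumulates a small random
    error at every step.
    """
    rng = random.Random(seed)
    est_x = est_y = 0.0
    heading_error = 0.0  # radians; the true heading is always 0
    errors = []
    for step in range(1, num_steps + 1):
        # Each step adds a little more heading error on top of the last.
        heading_error += math.radians(rng.gauss(0.0, heading_noise_deg))
        est_x += step_length * math.cos(heading_error)
        est_y += step_length * math.sin(heading_error)
        true_x, true_y = step * step_length, 0.0
        errors.append(math.hypot(est_x - true_x, est_y - true_y))
    return errors


def mean_error_at(distance_steps, trials=200):
    """Average the final position error over many simulated walks."""
    total = 0.0
    for seed in range(trials):
        total += simulate_drift(distance_steps, seed=seed)[-1]
    return total / trials


if __name__ == "__main__":
    for distance in (20, 100, 200):
        print(distance, "steps: mean error", round(mean_error_at(distance), 2), "m")
```

Because each small heading error carries forward into every later step, the average position error at 200 steps comes out much larger than at 20. Real systems have many more error sources, but the compounding effect is the same idea.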

As you might expect, there are solutions to this problem, and those solutions are getting better. SLAM has already gotten good enough to enable new scanning workflows that we wouldn’t have attempted even a few years ago, and it looks like it’s just getting started.

Among recent SLAM solutions, algorithms from Real Earth and GeoSLAM have been getting the most publicity.

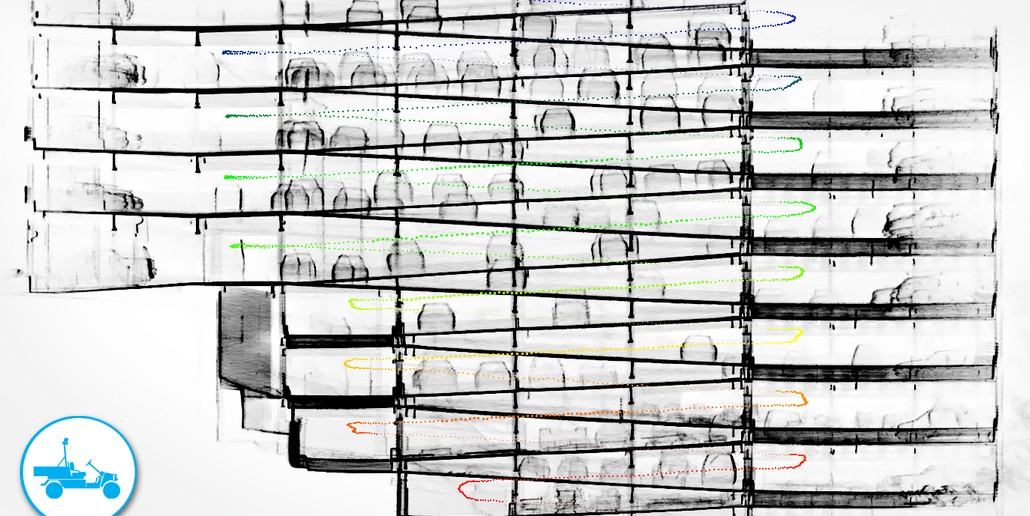

A map generated with Real Earth’s STENCIL.

Real Earth

Real Earth, for instance, just won first place in Microsoft’s Indoor Localization Competition in Vienna, Austria, using the SLAM algorithm they coded into their STENCIL handheld mapper.

According to their release from the competition, they did it with style, too, being the only solution to perform real-time indoor localization without the use of “infrastructure.” In this case, that means they located 15 markers in a large facility in 15 minutes, all without the use of any external sensors. Compared with a scan of the facility taken with a static scanner, the STENCIL showed a 0.16 m average error.

That makes it accurate enough for some uses, but not others. Still, that accuracy is nothing to sneeze at for a device you turn on with one button and carry around like an ice-cream cone, and it makes the STENCIL another viable tool in the 3D toolbox.

GeoSLAM

Another company to keep an eye on is GeoSLAM. They have a new handheld device of their own, the ZEB:REVO, as well as a collaboration with Surphaser they call SurphSLAM. The SurphSLAM is a small, trolley-based LiDAR system that includes Surphaser’s new model 10 scanner and a tablet running GeoSLAM’s SLAM algorithm. Jonathan Coco tested it out (and loved it).

As Coco explained to me, the SurphSLAM is especially exciting because it allows a hybrid workflow that we haven’t seen anywhere else. The device allows you to walk a space (quite quickly) and stop when you need to take a more detailed static scan. Coco explains that the SLAM algorithm will automatically combine the data for you. That means you’re getting the best of both worlds.

Possible Breakthroughs

What’s next? By many indications, some engineers are hard at work introducing control points to SLAM. This would help to keep SLAM accurate at long distances by providing precisely located landmarks that a device can use to correct for errors, combating the problem of location “drift” as the device scans at larger distances. “With control in place,” Coco told me, “it would allow us to confidently scan enormous structures (buildings, plants, stockpiles, etc.) with a handheld mapping system!”

In other words, Coco says, “I anticipate the usefulness of SLAM will escalate dramatically in the near future.”
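As a rough illustration of how a control point could rein in drift, the sketch below takes a drifted path and one surveyed landmark, then spreads the mismatch at that landmark linearly back along the path. This is a deliberately simple adjustment chosen for illustration, not the method any of the companies above have described; a production system would more likely fold control into a full optimization. The function name `correct_with_control_point` and the sample numbers are hypothetical.

```python
def correct_with_control_point(estimated_path, control_index, control_position):
    """Spread the mismatch at one control point linearly back along the path.

    estimated_path:   list of (x, y) positions estimated by the device
    control_index:    index of the path position taken at the control point
    control_position: the surveyed (x, y) of that control point

    Simplifying assumption: drift builds up evenly, so a position i steps
    along the path receives the fraction i / control_index of the correction.
    """
    est_x, est_y = estimated_path[control_index]
    dx = control_position[0] - est_x
    dy = control_position[1] - est_y
    corrected = []
    for i, (x, y) in enumerate(estimated_path):
        # Positions recorded after the control point get the full correction.
        weight = min(i / control_index, 1.0) if control_index > 0 else 1.0
        corrected.append((x + weight * dx, y + weight * dy))
    return corrected


if __name__ == "__main__":
    # Hypothetical data: the true path runs along y = 0, while the estimate
    # bends away from it a little more with every step.
    drifted = [(float(i), 0.02 * i * i) for i in range(11)]
    fixed = correct_with_control_point(drifted, 10, (10.0, 0.0))
    print("worst error before:", max(abs(y) for _, y in drifted))
    print("worst error after: ", max(abs(y) for _, y in fixed))
```

With a single landmark, the corrected path is pinned at the start and at the control point, and the leftover error is largest somewhere in between. More control points would shorten the stretches over which drift can build up.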

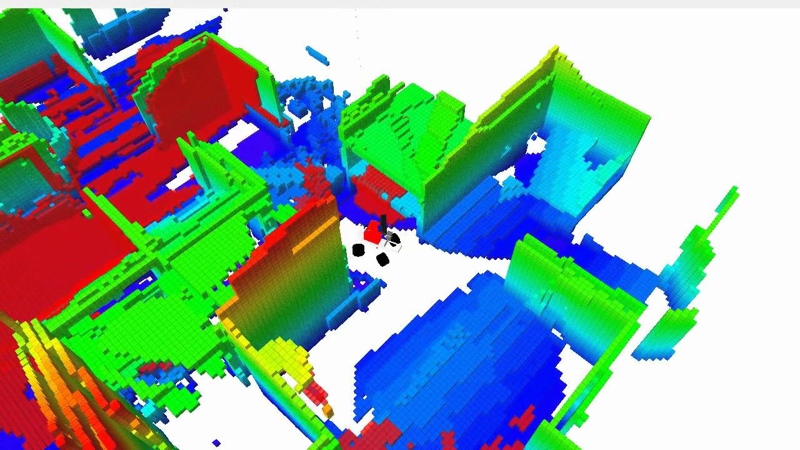

And remember how the technology was developed for robots? Well, imagine applying the SLAM algorithms that these companies are developing back to robots. Now you’ve got an autonomous machine that can explore its environment without human input and map it accurately. I’m sure you could find a use for that.

(Do you have something to say about SLAM? Think I’m full of hot air? Email me and we’ll set the record straight.)
