This post is a continuation of our conversation about deliverables. Last week I alluded to both Graphical User Interfaces and Natural User Interfaces, and I think those concepts deserve a bit more attention. From the computer punch card to Windows 7 or OS X Lion, we have made tremendous advances in reducing the knowledge level necessary to operate a computer. These advances represent the accomplishments of the Graphical User Interface. By giving users pictures instead of strings of code, millions more were able to accomplish complex computing tasks that they would not have completed without a GUI. As we all know, adding those additional users also brought down the cost of computing for us all. Will the Natural User Interface have the same effect? I doubt it. The GUI has been simulating the natural world rather well, and the conceptual leap from GUI to NUI is not as great as the leap from code to GUI. However, I do think that NUIs have the ability to put computing in places it is not today and, most importantly, they can sharply reduce the learning curve when encountering new applications.

So, just what is a NUI?

The idea is to provide access to data through systems and tools that the user already understands through previous experiences. The most widely seen systems are in video gaming. Take the Microsoft Kinect, for example. If you want your on-screen avatar to do something, you do it yourself and the Kinect reads your positional changes and duplicates those movements in the virtual environment. The user does not need to learn how to look left and right; he already knows how to do that. Why should we make him learn another way to do something he already knows how to do? Even if you do not have the ability to drop your data into a Kinect-controlled video game, using a video game engine for site virtualization is a great option. It’s been nearly 35 years since the Atari 2600 was released. That’s over 30 years of experience in our society with systems that are based upon moving through virtual environments with rather similar control interfaces.

Now that we are creating virtual environments, why shouldn’t we use the very systems that were designed to navigate through virtual environments?

While there are various game engines out there, most of my experience has been with the Unity 3D engine. There are a couple of reasons for this. First of all, it’s free. Secondly, game assets are stored as self-contained components. This means that if you build something (like all of the code necessary to control the movement as a “first person shooter”) you can literally drag and drop it into subsequent environments. Original creations are valuable, but copying and pasting is profitable! There is also a large user base, so many assets can be purchased from other programmers.

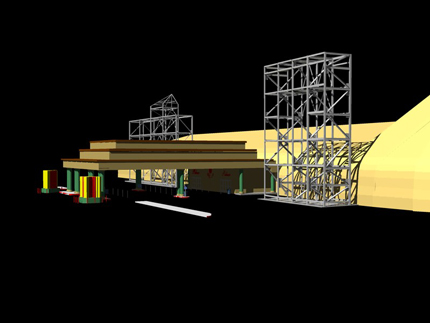

There are, of course, a few issues with getting your data into games. First, don’t use the geo-referenced coordinates. Move everything as close as possible to X=0, Y=0, and Z=0 for faster rendering. Second, you will need to prepare your model for importation into the game engine. I have had the best luck using the OBJ file format. My current workflow is to take the model (which I typically create in Cyclone) and export it to AutoCAD via the COE format. The COE file is converted to an AutoCAD DWG file. While in ACAD, I perform any cleaning or layering that I may need. Next, the DWG is imported into 3D Studio Max. Any textures or colors are applied in 3DS, and at this point I convert the model to a mesh. Finally, I optimize the mesh to reduce the number of faces as much as possible without altering the geometry and export the results as an OBJ file. The resulting file is not as attractive as it was in 3DS, but with Unity I can export the entire scene to multiple platforms (web, iOS, Android, EXE, Xbox, Wii, etc.) and my model is now accessible through a user interface that millions know how to operate.
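
If you would rather shift the coordinates after export than in ACAD or 3DS, here is a minimal Python sketch of the "move everything close to zero" step applied directly to an OBJ file. This is not part of my workflow above, just an illustration. The function name `recenter_obj` is hypothetical, and using the bounding-box center as the new origin is my own assumption; any fixed offset near the site would do.

```python
from typing import Iterable, List, Tuple


def recenter_obj(lines: Iterable[str]) -> Tuple[List[str], Tuple[float, float, float]]:
    """Shift all OBJ vertices so the bounding-box center sits at the origin.

    Hypothetical helper for illustration. Returns the rewritten lines and the
    offset that was subtracted, so the geo-referenced position can be restored.
    """
    lines = [line.rstrip("\n") for line in lines]

    # Collect vertex positions; "v" records start with x y z.
    verts = [
        tuple(float(p) for p in line.split()[1:4])
        for line in lines
        if line.startswith("v ")
    ]
    if not verts:
        return lines, (0.0, 0.0, 0.0)

    # Assumption: use the bounding-box center of each axis as the new origin.
    offset = tuple(
        (min(v[i] for v in verts) + max(v[i] for v in verts)) / 2.0
        for i in range(3)
    )

    out = []
    for line in lines:
        if line.startswith("v "):
            parts = line.split()
            xyz = [float(p) - o for p, o in zip(parts[1:4], offset)]
            extra = parts[4:]  # keep any trailing values untouched
            out.append(" ".join(["v"] + [f"{c:.6f}" for c in xyz] + extra))
        else:
            # Normals, texture coordinates, and faces do not change
            # when the whole model is translated.
            out.append(line)
    return out, offset
```

To use it, read the exported file with `open(path).readlines()`, pass the lines in, and write the result back out with newlines. Keep the returned offset somewhere safe; adding it back to any point in the game scene gives you the original geo-referenced coordinate.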

Next week we will continue with some more “non-standard” deliverable formats and look at using Augmented Reality applications to allow users to access your data. For some video examples, check out some posts on my YouTube channel on both Standard Deliverables and Non-Standard Deliverables.